The binding reads configuration on both the client and the server side and assembles the channel factory and the channel listener, respectively. It must put the binding elements in the correct order. The recommended order, from top to bottom, is TransactionFlow, ReliableSession, Security, CompositeDuplex, OneWay, StreamSecurity, MessageEncoding and Transport.
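As an illustration of that order, here is a minimal sketch that builds a CustomBinding in code. It assumes .NET Framework with System.ServiceModel referenced, uses stock WCF binding elements rather than the custom transport from this post, and leaves out Security, CompositeDuplex, OneWay and StreamSecurity for brevity. The class name OrderedBindingSample is made up for the example.

```csharp
using System.ServiceModel.Channels;

// Sketch only: stock binding elements listed in the recommended order,
// from the top of the stack (closest to the application) down to the transport.
public static class OrderedBindingSample
{
    public static CustomBinding Create()
    {
        return new CustomBinding(
            new TransactionFlowBindingElement(),      // transaction flow
            new ReliableSessionBindingElement(),      // reliability
            new TextMessageEncodingBindingElement(),  // message encoding
            new HttpTransportBindingElement());       // transport, always last
    }
}
```

The transport element goes last because it sits at the bottom of the channel stack, closest to the wire.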

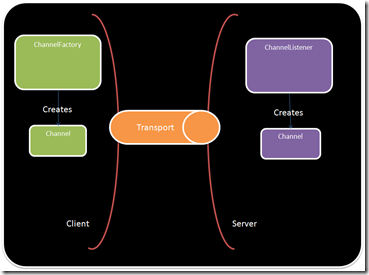

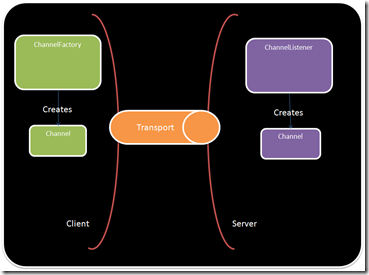

The picture above illustrates the relationship between the ChannelListener and the ChannelFactory in WCF: the listener creates the channel on the server side and the factory creates it on the client side. There are several interfaces describing the kind of channel to be built. In WCF literature these are known as channel shapes.

One of the possible shapes is the request/reply shape. I like to think of the channel shape as the way each party in the communication sees the channel. In this example the client wants to use the channel to send requests, so it is natural that it sees the channel as an IRequestChannel. On the other hand, the server gets the requests from the channel and wants to use it to send the reply back to the client, so it sees the channel as an IReplyChannel. By the way, if you are looking for the method that sends the reply back, it is a member of the RequestContext class.
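To make the two views concrete, here is a minimal sketch of one round trip, assuming .NET Framework with System.ServiceModel referenced. RequestReplySketch and the action URIs are hypothetical; ReceiveRequest, RequestContext.Reply and IRequestChannel.Request are the WCF members involved.

```csharp
using System.ServiceModel.Channels;

// Sketch only: the action URIs are hypothetical placeholders.
public static class RequestReplySketch
{
    // Server side: the channel is seen as an IReplyChannel.
    public static void ServeOne(IReplyChannel channel)
    {
        RequestContext context = channel.ReceiveRequest();
        if (context == null)
        {
            return; // defensive: treat a null context as "no more requests"
        }

        string action = context.RequestMessage.Headers.Action;
        Message reply = Message.CreateMessage(
            context.RequestMessage.Version, "urn:hypothetical/reply", "handled " + action);

        // The reply goes back through the RequestContext, not through the channel itself.
        context.Reply(reply);
        context.Close();
    }

    // Client side: the channel is seen as an IRequestChannel.
    public static string SendOne(IRequestChannel channel, MessageVersion version)
    {
        Message request = Message.CreateMessage(version, "urn:hypothetical/request", "ping");
        Message reply = channel.Request(request); // blocks until the reply arrives
        return reply.GetBody<string>();
    }
}
```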

Inside the Transport Binding Element

When assembling the channel, one of the first things WCF does is check which shapes the channel supports. It does that by calling CanBuildChannelListener and CanBuildChannelFactory on the binding element.

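This snippet shows the source code for a transport binding element that supports the request/reply and the input/output shapes: the factory can build IOutputChannel and IRequestChannel, and the listener can build IInputChannel and IReplyChannel.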

```csharp
public override bool CanBuildChannelFactory<TChannel>(BindingContext context)
{
    return
        typeof(TChannel) == typeof(IOutputChannel) ||
        typeof(TChannel) == typeof(IRequestChannel);
}

public override bool CanBuildChannelListener<TChannel>(BindingContext context)
{
    return
        typeof(TChannel) == typeof(IInputChannel) ||
        typeof(TChannel) == typeof(IReplyChannel);
}
```
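The same shape checks can be probed from the outside, which is roughly what WCF does before it builds anything. The sketch below assumes .NET Framework's System.ServiceModel and uses the stock HttpTransportBindingElement, because the custom element is not shown in full here; the class name ShapeProbe is hypothetical. HTTP supports only request/reply out of the box, so the one-way IOutputChannel probe should come back false.

```csharp
using System.ServiceModel.Channels;

// Sketch only: asks a stock HTTP binding which shapes it can build.
public static class ShapeProbe
{
    public static bool[] Probe()
    {
        var binding = new CustomBinding(
            new TextMessageEncodingBindingElement(),
            new HttpTransportBindingElement());

        return new[]
        {
            binding.CanBuildChannelFactory<IRequestChannel>(), // client sends requests
            binding.CanBuildChannelListener<IReplyChannel>(),  // server sends replies
            binding.CanBuildChannelFactory<IOutputChannel>(),  // one-way send
        };
    }
}
```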

Later, WCF calls the build methods to get the listener on the server side and the factory on the client side. In these methods we should validate whether it is possible to assemble the factory or the listener based on the current state of the binding, and then instantiate them.

```csharp
public override IChannelFactory<TChannel> BuildChannelFactory<TChannel>(BindingContext context)
{
    if (context == null)
    {
        throw new ArgumentNullException("context");
    }

    if (!this.CanBuildChannelFactory<TChannel>(context))
    {
        throw new InvalidOperationException(string.Format("Channel Not Supported - {0}", typeof(TChannel).Name));
    }

    if (base.ManualAddressing)
    {
        throw new InvalidOperationException("Manual Addressing Not Supported");
    }

    return new CustomChannelFactory<TChannel>(this, context);
}

public override IChannelListener<TChannel> BuildChannelListener<TChannel>(BindingContext context)
{
    // Same argument validation as on the factory side.
    if (context == null)
    {
        throw new ArgumentNullException("context");
    }

    if (typeof(TChannel) == typeof(IReplyChannel))
    {
        return (IChannelListener<TChannel>)(new CustomReplyChannelListener(this, context));
    }

    if (typeof(TChannel) == typeof(IInputChannel))
    {
        return (IChannelListener<TChannel>)(new CustomInputChannelListener(this, context));
    }

    throw new InvalidOperationException("Unsupported channel listener.");
}
```

In the channel listener we must provide a way to accept a new channel on which to receive messages from clients. One thing I noticed is that on the server side it is important to implement the asynchronous way of accepting the channel (OnBeginAcceptChannel and OnEndAcceptChannel), while on the client side we can implement only the synchronous way of sending messages (requests) to the server.

The OnAcceptChannel method can be called multiple times, in which case several channels would be open at the same time. This sample is based on the Null channel sample, where that would not make sense. This is why an AutoResetEvent is used: the component requesting a second channel blocks until the first component releases its channel or the operation times out.

If you are using multiple channels, don’t forget to detach the event handlers in the Closed handler to prevent memory leaks.
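Stripped of the WCF types, the one-channel-at-a-time idea can be sketched as follows. FakeChannel and SingleChannelGate are hypothetical stand-ins for the custom reply channel and its listener, not WCF classes; the point is the AutoResetEvent handshake and detaching the Closed handler.

```csharp
using System;
using System.Threading;

// Hypothetical stand-in for a reply channel; only its Closed event matters here.
public sealed class FakeChannel
{
    public event EventHandler Closed;

    public bool IsClosed { get; private set; }

    // True while anyone is still subscribed to Closed (used to check for leaks).
    public bool HasClosedSubscribers => Closed != null;

    public void Close()
    {
        IsClosed = true;
        Closed?.Invoke(this, EventArgs.Empty);
    }
}

// Hypothetical listener stand-in: hands out one channel at a time.
public sealed class SingleChannelGate
{
    private readonly AutoResetEvent channelReleased = new AutoResetEvent(false);
    private readonly object thisLock = new object();
    private FakeChannel current;

    public FakeChannel Accept(TimeSpan timeout)
    {
        lock (thisLock)
        {
            if (current == null)
            {
                // First call: nothing to wait for.
                current = Attach(new FakeChannel());
                return current;
            }
        }

        // A channel is already out: wait for its Closed handler to signal, or time out.
        // Note: WaitOne(TimeSpan) rejects values above int.MaxValue milliseconds.
        if (!channelReleased.WaitOne(timeout))
        {
            throw new TimeoutException("No channel became available in time.");
        }

        lock (thisLock)
        {
            current = Attach(new FakeChannel());
            return current;
        }
    }

    private FakeChannel Attach(FakeChannel channel)
    {
        channel.Closed += OnChannelClosed;
        return channel;
    }

    private void OnChannelClosed(object sender, EventArgs e)
    {
        // Detach so the closed channel no longer holds a reference back to the gate.
        ((FakeChannel)sender).Closed -= OnChannelClosed;
        channelReleased.Set();
    }
}
```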

```csharp
protected override IReplyChannel OnAcceptChannel(TimeSpan timeout)
{
    if (base.State != CommunicationState.Opened)
    {
        throw new CommunicationObjectFaultedException(string.Format("The channel {0} is not opened", this.GetType().Name));
    }

    if (currentChannel != null)
    {
        // Please revisit this as we must accept multiple channels.
        // Wait until the current channel is released (waitChannel is presumably signaled
        // by OnCurrentChannelClosed, not shown) or until the timeout expires.
        // WCF may pass TimeSpan.MaxValue, which WaitOne cannot take, so map it to Infinite.
        int waitMilliseconds = timeout.TotalMilliseconds >= int.MaxValue
            ? Timeout.Infinite
            : (int)timeout.TotalMilliseconds;

        if (!waitChannel.WaitOne(waitMilliseconds, false))
        {
            throw new TimeoutException(string.Format("No channel became available within {0}.", timeout));
        }

        lock (ThisLock)
        {
            // re-open channel
            if (base.State == CommunicationState.Opened && currentChannel != null && currentChannel.State == CommunicationState.Closed)
            {
                currentChannel = new CustomReplyChannel(this, localAddress);
                currentChannel.Closed += new EventHandler(OnCurrentChannelClosed);
            }
        }
    }
    else
    {
        lock (ThisLock)
        {
            // open channel at first time and register this listener
            currentChannel = new CustomReplyChannel(this, localAddress);
            currentChannel.Closed += new EventHandler(OnCurrentChannelClosed);
            CustomListeners.Current.Add(filter, this);
        }
    }

    return currentChannel;
}

protected override IAsyncResult OnBeginAcceptChannel(TimeSpan timeout, AsyncCallback callback, object state)
{
    this.OnAcceptChannel(timeout);
    return new CompletedAsyncResult(callback, state);
}

protected override IReplyChannel OnEndAcceptChannel(IAsyncResult result)
{
    CompletedAsyncResult.End(result);
    return currentChannel;
}
```
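The OnBeginAcceptChannel above calls OnAcceptChannel synchronously and then returns an already-completed result, so the caller's thread stays blocked while a second channel waits on the AutoResetEvent. A minimal sketch of a genuinely asynchronous Begin/End pair, built on Task (which implements IAsyncResult), might look like this. AsyncAccept is a hypothetical helper, not a WCF type, and the wiring in the trailing comment is an assumption about how it could be plugged in.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical helper: exposes a blocking accept through the Begin/End (APM) pattern.
public static class AsyncAccept
{
    // Starts 'accept' on the thread pool and returns an IAsyncResult for it.
    public static IAsyncResult Begin<T>(Func<T> accept, AsyncCallback callback, object state)
    {
        // Passing 'state' to the TaskCompletionSource makes IAsyncResult.AsyncState return it.
        var tcs = new TaskCompletionSource<T>(state);

        Task.Run(accept).ContinueWith(t =>
        {
            if (t.IsFaulted)
            {
                tcs.TrySetException(t.Exception.InnerExceptions);
            }
            else if (t.IsCanceled)
            {
                tcs.TrySetCanceled();
            }
            else
            {
                tcs.TrySetResult(t.Result);
            }

            // APM contract: invoke the callback once the operation has completed.
            callback?.Invoke(tcs.Task);
        }, TaskScheduler.Default);

        return tcs.Task;
    }

    // Waits if needed and returns the result, rethrowing the original exception.
    public static T End<T>(IAsyncResult result)
    {
        return ((Task<T>)result).GetAwaiter().GetResult();
    }
}

// Possible use inside the custom listener (hypothetical wiring):
//   protected override IAsyncResult OnBeginAcceptChannel(TimeSpan timeout, AsyncCallback callback, object state)
//       => AsyncAccept.Begin(() => this.OnAcceptChannel(timeout), callback, state);
//   protected override IReplyChannel OnEndAcceptChannel(IAsyncResult result)
//       => AsyncAccept.End<IReplyChannel>(result);
```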

In the channel factory, the logic that creates the channel lives in OnCreateChannel. It is also important to understand the rationale behind this: the client side creates the channel and the server side accepts it. As you can imagine, the server can reject it, in which case an exception is thrown.

```csharp
protected override TChannel OnCreateChannel(System.ServiceModel.EndpointAddress address, Uri via)
{
    // URI schemes are ASCII, so an ordinal case-insensitive comparison is enough.
    if (!string.Equals(address.Uri.Scheme, this.element.Scheme, StringComparison.OrdinalIgnoreCase))
    {
        throw new ArgumentException(string.Format("The scheme {0} specified in address is not supported.", address.Uri.Scheme), "address");
    }

    if (typeof(TChannel) == typeof(IOutputChannel))
    {
        return (TChannel)(object)new CustomOutputChannel(this, address, via);
    }
    else if (typeof(TChannel) == typeof(IRequestChannel))
    {
        return (TChannel)(object)new CustomRequestChannel(this, address, via);
    }
    else
    {
        throw new InvalidOperationException("Cannot create channel");
    }
}
```
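On the client, the factory is normally driven by WCF (for example through a ChannelFactory for a service contract), but it can also be exercised directly. The sketch below assumes .NET Framework's System.ServiceModel and a Binding whose last element is the custom transport; ClientSketch and the action URI are hypothetical. With the factory above, an address whose scheme does not match the transport is rejected in OnCreateChannel.

```csharp
using System;
using System.ServiceModel;
using System.ServiceModel.Channels;

// Sketch only: drives a request channel factory by hand.
public static class ClientSketch
{
    // Sends one request and returns the reply body as a string.
    public static string SendOne(Binding binding, Uri address)
    {
        IChannelFactory<IRequestChannel> factory = binding.BuildChannelFactory<IRequestChannel>();
        factory.Open();
        try
        {
            // OnCreateChannel runs here; an unsupported scheme throws.
            IRequestChannel channel = factory.CreateChannel(new EndpointAddress(address));
            channel.Open();

            Message request = Message.CreateMessage(binding.MessageVersion, "urn:hypothetical/ping", "ping");
            Message reply = channel.Request(request);
            string body = reply.GetBody<string>();

            channel.Close();
            return body;
        }
        finally
        {
            factory.Close(); // also closes any channel still open
        }
    }
}
```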

In the next part we will take a look at how the channels actually work.

Have fun,

Pedro